Load-balancing

- Project name: Load-balancing

- Class: 4IT496 (WS 2015/2016)

- Author: Bc. Patrik Tomášek

- Model type: Discrete-event simulation

- Software used: SimProcess, trial version


Problem definition

A hosting company with its own infrastructure uses so-called "load balancing" to distribute the overall load among multiple servers (hardware nodes), and "high availability" (HA) to minimize service downtime.

Load-balancing

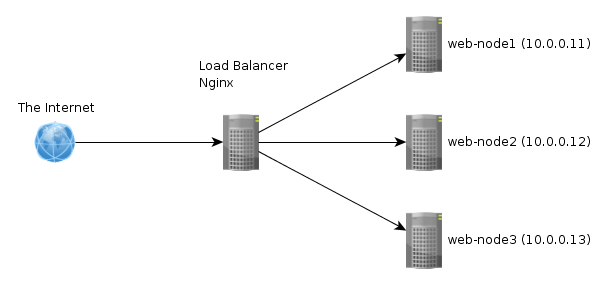

In the case of a web hosting service, load balancing means dividing the incoming requests among multiple devices (called nodes) based on a set of rules (priority, weight, etc.). The simulation is based on nginx (web server software) load balancing.

Note: In the picture the load-balancer isn't redundant, therefore HA isn't enabled. The simulation has two load-balancers present.
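nginx distributes requests with round-robin by default, and per-server weights can be set with the `weight=` parameter of the `server` directive inside an `upstream` block. The following is a minimal Python sketch of the "smooth" weighted round-robin idea that nginx is generally described as using; it is an illustration, not nginx's source code, and the node names `a`, `b`, `c` and their weights are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Peer:
    name: str
    weight: int       # configured weight (hypothetical values below)
    current: int = 0  # running score used by the smooth algorithm


def pick(peers: list[Peer]) -> Peer:
    """Select the next peer using smooth weighted round-robin."""
    total = 0
    best = None
    for peer in peers:
        # Every peer gains its weight in each selection round.
        peer.current += peer.weight
        total += peer.weight
        # Strict ">" means the first peer wins a tie.
        if best is None or peer.current > best.current:
            best = peer
    # The chosen peer "pays back" the total, which spreads heavy peers out.
    best.current -= total
    return best


# Hypothetical pool: one node with weight 5, two nodes with weight 1.
pool = [Peer("a", 5), Peer("b", 1), Peer("c", 1)]
sequence = [pick(pool).name for _ in range(7)]
print(sequence)  # ['a', 'a', 'b', 'a', 'c', 'a', 'a']
```

With weights 5:1:1 the heavy node receives five of every seven requests, but they are interleaved with the lighter nodes instead of being sent in one burst.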

High-availability

High availability ensures that a system or component is operational for a desired proportion of time. The solution needed to provide web hosting consists of many parts, all of which must be on-line for the whole to be operational.

To enable HA, a provider can use failover and backups. Failover is essentially a backup piece of hardware: when a component goes off-line, another one takes its place. After that, it is necessary to load the backup onto the component that took over. If a SAN (storage area network) is implemented, there is no need to load a backup, because the failover node simply uses the same data from one central storage shared by all the server nodes. In the simulation a SAN is implemented and failover is taken into consideration.
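The point that every part must be on-line, and that failover adds a second unit, can be expressed with the usual availability formulas: components in series multiply their availabilities, while a redundant pair is down only if both units are down. The sketch below assumes independent failures and a hypothetical availability of 99 % per device; neither figure comes from the simulation.

```python
from math import prod


def series(availabilities):
    """All components must be up (e.g. load-balancer -> SAN -> DB chain)."""
    return prod(availabilities)


def parallel(availabilities):
    """At least one redundant unit must be up (e.g. a failover pair)."""
    return 1 - prod(1 - a for a in availabilities)


# Hypothetical availability of 99 % for every device.
redundant_lb = parallel([0.99, 0.99])       # two load-balancers
chain = series([redundant_lb, 0.99, 0.99])  # LB pair + one SAN + one DB server

print(round(redundant_lb, 6))  # 0.9999
print(round(chain, 6))
```

Under these assumptions the single, non-redundant devices dominate the overall figure, which is why redundancy matters for every critical component and not only for the load-balancer.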

Anti-DoS/DDoS

A denial-of-service (DoS) attack is an incident in which the targeted service goes down. Distributed denial-of-service (DDoS) means that more than one system is used to attack a single target. There are several possible means of protection against such an attack. Setting up decent firewall rules might be a good place to start, but it isn't as effective as implementing a device that can mitigate the attack. A Radware DefensePro device is implemented in the simulation.

The goal of the simulation is to find the optimal number of servers and other components necessary to enable the mentioned functions (LB, HA, anti-DoS).

Method

Discrete-event simulation can be done with software other than SimProcess; however, a graphical interface was preferred, because building a simulation in a GUI is more user-friendly than writing it in code.

There are some limitations due to the use of a trial version, but this simulation doesn't reach them.

All the prices in the simulation are in the default currency, which is USD.

At first I wanted to simulate a whole day; however, the default time unit is a second and the simulation took a lot of time. Therefore three short time intervals are simulated, each taking into consideration the highest number of requests that need to be processed.

Model

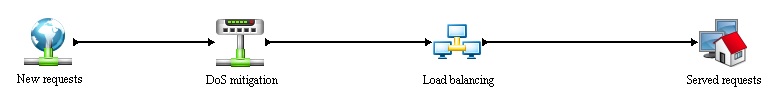

The simulation is divided into 4 main processes:

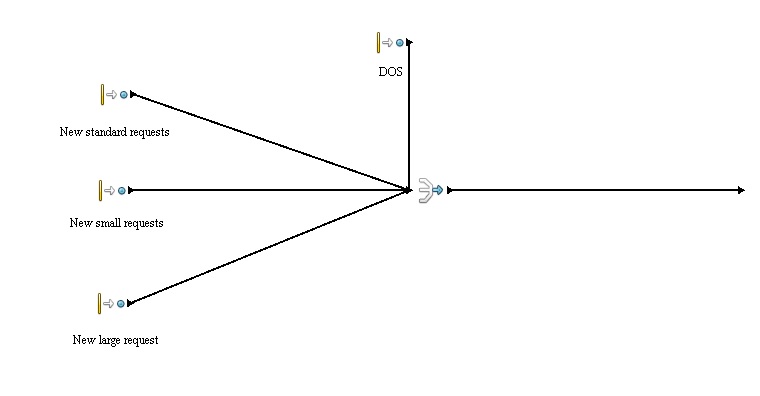

- New requests - generation of new requests; further information is in the Entities section.

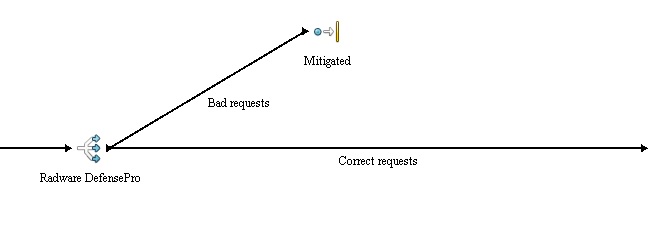

- DoS mitigation - implementation of the anti-DoS device.

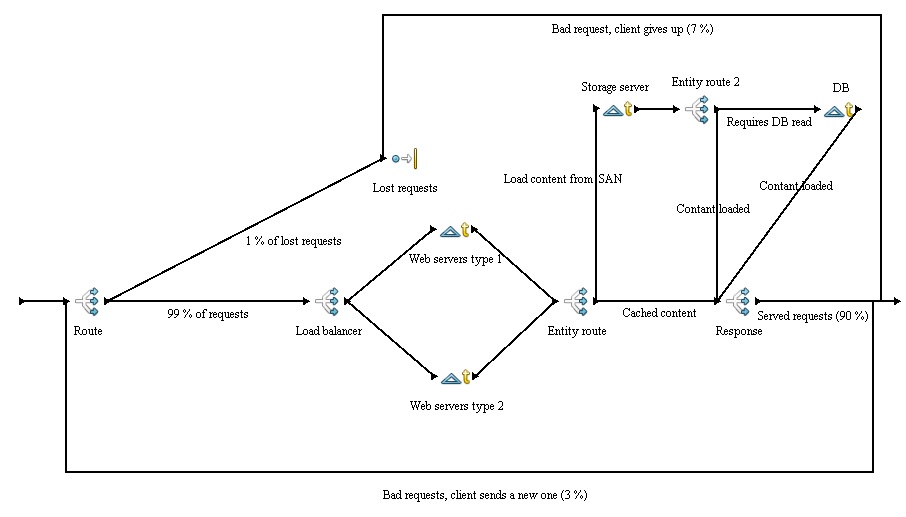

- Load balancing - the main process, which distributes the generated (incoming) requests among the resources.

- Served requests - simple disposal of the processed requests.

The simulation is set to run in two 9-minute iterations, using multiple schedules to apply changes in the content of a web page (cached/un-cached content).

The generated requests correspond to the highest recorded numbers for the given hosting environment (the so-called peak). Other load levels don't need to be taken into consideration, because the system must be able to handle the peak, and with owned hardware there is not much use in downscaling. Besides, the peak might come at an odd hour, and starting up an off-line server takes a lot of time. It is better to use the unallocated server capacity for some other computation that might benefit the provider.

Time unit used: seconds

Number of all requests: 9000 per second is the maximum recorded in the given environment. For simulation purposes this number was divided by 100, giving 90 requests per second.

Number of replications: 2
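The scaled arrival rate and the expected workload per iteration follow directly from the figures above. A small sketch of that arithmetic, assuming the roughly 1 % loss modelled by the "Route" activity in the Load balancing process; the actual counts in a run vary, because the arrivals are random.

```python
PEAK_RPS = 9000       # maximum recorded requests per second
SCALE = 100           # divisor used for the simulation
RUN_SECONDS = 9 * 60  # one 9-minute iteration
LOSS = 0.01           # ~1 % of requests lost before reaching the load-balancer

sim_rps = PEAK_RPS / SCALE
expected_per_run = sim_rps * RUN_SECONDS
expected_at_lb = expected_per_run * (1 - LOSS)

print(sim_rps)           # 90.0
print(expected_per_run)  # 48600.0
print(round(expected_at_lb))
```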

Entities

This is a list of all defined and used entities within the simulation.

Requests

The request data are based on real data from a hosting environment that hosts multiple Magento e-shops (a rather complex system that uses a lot of hardware).

The incoming requests are categorised because Magento uses caching and indexing. It takes less time to serve cached content than un-cached content. Furthermore, multiple schedules are used to generate the requests: if new data have been added to the e-shop, they need to be cached first, which means more large requests.

- Small request

Request for cached content.

- Standard request

Request for content on the storage server, without the need to access the database.

- Large request

Request for content on the storage server and in the database.

DDoS

A large number of requests aiming to cripple the system.

Resources

This is only a brief summary of the resources used; further information follows in the Processes section of this page.

When it comes to resource prices, only leasing is taken into consideration. Of course, it is also possible to purchase a given component. Cloud computing is not considered either.

Load-balancer

An important component described earlier on this page.

These devices are quite expensive. When leased, a component sufficient for the requirements of this simulation can cost 300 USD monthly.

Web server

There are two types of this resource, each with different capabilities:

Type 1 - a faster server that goes for 150 USD monthly

Type 2 - a slower server that goes for 100 USD monthly

Storage server SAN

In the simulation, only "SAN" is used as the name of this resource.

The price of one SAN depends on the configuration (number of HDDs, etc.). A sufficient SAN can be leased for 220 USD monthly.

Database server

The database server is quite fast, so only one is necessary in the simulation; however, to achieve HA for the DB server as well, a second one should be implemented.

The price of one DB server is about 200 USD monthly.

Radware DefensePro

This is the anti-DoS component implemented in the simulation.

The cost of this hardware is extremely high, therefore a partial lease is used.

The price depends on the provider's internet connection; in this case it is around 50 USD monthly.

Processes

New requests

In this process new requests are generated according to the following schedule:

Interval: 1 second

| Entity type | Most content cached | Standard content | New content added | Sum of normal requests | Additional DoS requests (red square) |
|---|---|---|---|---|---|
| New small request (green dot) | Poi(45) | Poi(25) | Poi(13.5) | Poi(90) | Poi(150) |
| New standard request (orange dot) | Poi(31.5) | Poi(45) | Poi(31.5) | Poi(90) | Poi(150) |
| New large request (black dot) | Poi(13.5) | Poi(20) | Poi(45) | Poi(90) | Poi(150) |
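In each schedule column the three means add up to 90, the scaled peak rate; because independent Poisson streams sum to a Poisson stream with the summed mean, this is presumably what the "Sum of normal requests" column expresses. The sketch below generates one second of arrivals per schedule. It uses Knuth's multiplication method because Python's `random` module has no Poisson sampler, it leaves out the DoS column, and the names `SCHEDULES`, `poisson` and `arrivals_for_second` are hypothetical; it illustrates the generator rather than reproducing SimProcess's implementation.

```python
import math
import random

# Mean arrivals per 1-second interval for each schedule, from the table above.
SCHEDULES = {
    "most content cached": {"small": 45, "standard": 31.5, "large": 13.5},
    "standard content": {"small": 25, "standard": 45, "large": 20},
    "new content added": {"small": 13.5, "standard": 31.5, "large": 45},
}


def poisson(lam: float, rng: random.Random) -> int:
    """Knuth's multiplication method; fine for the small rates used here."""
    limit = math.exp(-lam)
    k, p = 0, 1.0
    while True:
        p *= rng.random()
        if p <= limit:
            return k
        k += 1


def arrivals_for_second(schedule: str, rng: random.Random) -> dict[str, int]:
    """Number of new small/standard/large requests in one 1-second interval."""
    return {kind: poisson(lam, rng) for kind, lam in SCHEDULES[schedule].items()}


rng = random.Random(42)
for name, rates in SCHEDULES.items():
    # The per-schedule means sum to 90 requests per second.
    print(name, sum(rates.values()), arrivals_for_second(name, rng))
```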

DoS mitigation

This is a simple process that implements the anti-DoS device and ensures that the system is not affected by the attack.

The "Branch" activity simply forwards only the correct requests and disposes of the rest, thus mitigating the attack.

Without this device the system would get flooded, as illustrated later in the Results section of this page.

Load balancing

This is the main process of the simulation. First, it is expected that about 1 % of incoming requests are lost somewhere on the way; this is what the "Route" activity models, and the lost requests are simply disposed of. Then the requests arrive at the load-balancer, which divides them based on probability. It would also be possible to route each request to the least busy server or to use something similar. Load-balancers are designed to handle extreme amounts of requests and are very fast, therefore the simulation uses a delay of only 1 ms, and even that is probably too high. Basically, with the number of requests generated in the simulation, the LB can't be overloaded, even during a DoS attack. However, it is a critical piece of hardware, because if it goes down no request will reach the host; therefore it must be redundant to enable HA (so two LBs are required).
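The "Route" loss and the probability-based split can be sketched as follows. The page does not state the split probabilities, so the 70/30 weights in `POOL_WEIGHTS` and the pool names are hypothetical; `random.choices` stands in for the load-balancer's probabilistic branch.

```python
import random
from typing import Optional

LOSS_PROBABILITY = 0.01  # ~1 % of requests lost on the way ("Route" activity)

# Hypothetical split between the two server pools (not stated on this page).
POOL_WEIGHTS = {"type_one": 0.7, "type_two": 0.3}


def route(rng: random.Random) -> Optional[str]:
    """Return the chosen server pool, or None if the request was lost."""
    if rng.random() < LOSS_PROBABILITY:
        return None  # disposed of before reaching the load-balancer
    pools = list(POOL_WEIGHTS)
    return rng.choices(pools, weights=[POOL_WEIGHTS[p] for p in pools], k=1)[0]


rng = random.Random(1)
results = [route(rng) for _ in range(100_000)]
lost = results.count(None) / len(results)
share_one = results.count("type_one") / (len(results) - results.count(None))
print(f"lost: {lost:.3%}, sent to type one: {share_one:.1%}")
```

Because the split is random, it only balances the load on average; a least-busy rule would react to the current queue lengths instead.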

After this, a request reaches either a server of type 1 or one of type 2. Server type 1 is a delay of Nor(25, 3) ms and server type 2 is a delay of Nor(40, 3) ms, because it is slower. Once the web server has processed the request, where it goes next is decided based on Entity.type. A small request goes straight to the next Branch activity, "Response". Standard and large requests are sent to the storage server. The storage server represents another delay, and because the system uses a SAN, it is a common resource for all the web servers. The delay of the SAN is defined as Nor(10.0, 2.0) ms. Standard requests are then sent to the "Response" activity by the "Entity route 2" activity. Large requests are additionally sent by the same activity to the DB server (with the same delay as the storage server), after which they continue to the "Response" activity. The Branch activity "Response" serves the content to the client, but only 90 % of requests are correct; 7 % are incorrect and the client gives up, and 3 % are also incorrect but the client sends a new request.
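The request paths above can be summarised as expected processing times per request type and server type. This minimal sketch uses the delays stated in the text, ignores queueing and the 1 ms load-balancer delay, and clips negative normal draws at zero (an assumption of this sketch; the page does not say how SimProcess handles them). The dictionary and function names are hypothetical.

```python
import random

# (mean, standard deviation) of each delay in milliseconds, as stated above.
WEB_DELAY_MS = {"type_one": (25.0, 3.0), "type_two": (40.0, 3.0)}
SAN_DELAY_MS = (10.0, 2.0)
DB_DELAY_MS = (10.0, 2.0)  # same delay as the storage server

# Back-end stages each request type visits after the web server.
STAGES = {"small": [], "standard": [SAN_DELAY_MS], "large": [SAN_DELAY_MS, DB_DELAY_MS]}

# Outcome probabilities of the "Response" branch.
OUTCOMES = {"served": 0.90, "client gives up": 0.07, "client retries": 0.03}


def mean_path_ms(request_type: str, server: str) -> float:
    """Expected processing time, ignoring queueing and the 1 ms LB delay."""
    return WEB_DELAY_MS[server][0] + sum(mean for mean, _ in STAGES[request_type])


def sample_path_ms(request_type: str, server: str, rng: random.Random) -> float:
    """One random processing time, each stage drawn from Nor(mean, sd)."""
    stages = [WEB_DELAY_MS[server], *STAGES[request_type]]
    # Clipped at zero (assumption), since a normal draw can in principle be negative.
    return sum(max(0.0, rng.gauss(mu, sd)) for mu, sd in stages)


def response_outcome(rng: random.Random) -> str:
    names = list(OUTCOMES)
    return rng.choices(names, weights=[OUTCOMES[n] for n in names], k=1)[0]


for kind in STAGES:
    print(kind, mean_path_ms(kind, "type_one"), mean_path_ms(kind, "type_two"))
# small 25.0 40.0
# standard 35.0 50.0
# large 45.0 60.0
```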

Served requests

A simple disposal of the served requests.

Results

This section is divided into the following cases.

Case 1: Optimal number of servers with DoS attack mitigation present

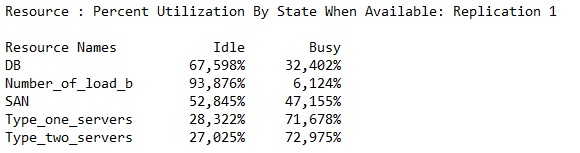

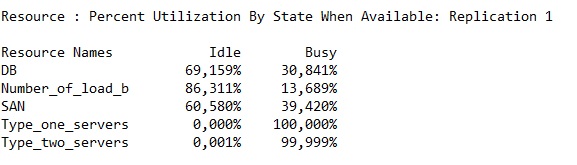

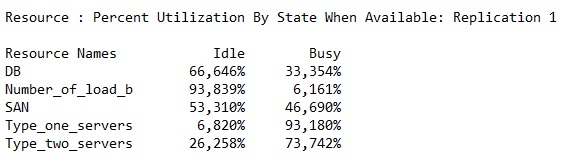

The simulation has shown that the optimal number of servers necessary to process incoming requests properly is 3 of type one and 2 of type two.

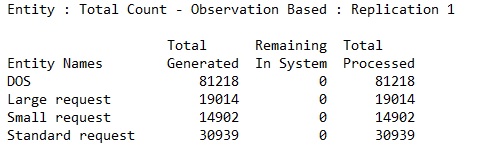

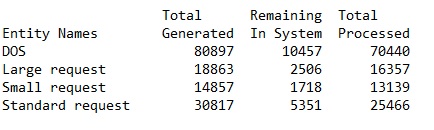

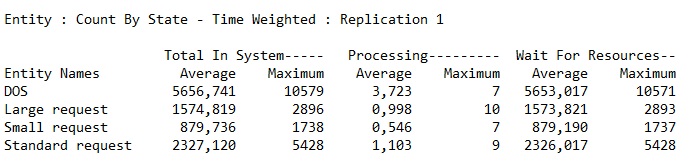

Shows the number of all generated and processed requests.

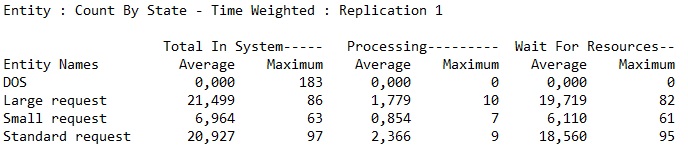

The wait time for resources is within acceptable bounds. If it were over 100 ms, some improvement would be needed.

HA must be considered, but in this case it is expected that no more than one server will go off-line at a time. The probability of more than one server going off-line at the same time is quite low. The number of servers could be raised to achieve better HA capabilities, but it wouldn't speed up the process much, and there is a reserve in the current state.

The cost of this system configuration is:

Servers type one: 3x 150 USD

Servers type two: 2x 100 USD

Load-balancers: 2x 300 USD

SANs: 2x 220 USD

DB server: 1x 200 USD

Total monthly costs: 1890 USD
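The total can be checked with a few lines of arithmetic. The DefensePro partial lease (about 50 USD monthly) is not part of the 1890 USD figure; the last line shows how it could be added. The names are illustrative only.

```python
# Unit counts and monthly lease prices (USD) from the configuration above.
CONFIGURATION = {
    "web server type one": (3, 150),
    "web server type two": (2, 100),
    "load-balancer": (2, 300),
    "SAN": (2, 220),
    "DB server": (1, 200),
}


def monthly_cost(config: dict[str, tuple[int, int]]) -> int:
    """Sum of count * monthly price over all components."""
    return sum(count * price for count, price in config.values())


print(monthly_cost(CONFIGURATION))  # 1890
print(monthly_cost({**CONFIGURATION, "DefensePro (partial lease)": (1, 50)}))  # 1940
```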

The entire solution is very expensive, and it might be more cost-effective to buy at least some of the devices. Web servers are relatively cheap, so I would start there.

Case 2: DoS attack with no mitigation

In this case the number of resources used is the same as in the previous one, but the DoS mitigation device is not present.

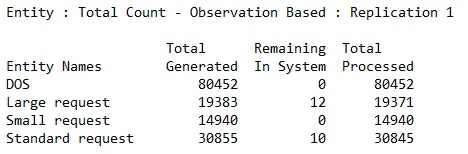

Shows the number of all generated and processed requests. Here it is clearly visible that the system was flooded and wasn't able to process all the requests.

The wait time for resources is absolutely unacceptable; basically all the requests would time out before they even started being processed.

All the web servers are flooded and can't handle the number of incoming requests.

This shows that a DoS attack can crush the entire system, so proper measures must be in place, as illustrated in the previous case.

Case 3: Same as case 1 with one server (type 1) down

Some requests were not processed because one server was off-line, but they would have been if the simulation had continued for a few more milliseconds.

This shows that even with one server down, all the requests get processed and HA is functional.

Having one server off-line raised the usage of the "Type_one_servers" resource from 72 % to 93 %, which is still sufficient.

Conclusion

The simulation has identified the optimal number of web servers and other involved components. As expected, the costs of such a system are quite high.

Because the simulation was made using SimProcess, there are some limitations in how resources can be defined: here a resource is represented only by its delays and the number of available units. It would be better to represent server capabilities by something other than a delay; however, this is sufficient.

The simulation can be modified to find the optimal number of servers and other components for a different number of requests. In this simulation the optimal numbers are: 3 web servers of type one, 2 web servers of type two, two load-balancers (only due to redundancy, otherwise one would be enough), two SANs (for the same reason), and one DB server. The overall monthly cost of such a system is about 1890 USD.

The simulation has also illustrated the effect of a DoS attack and a possible protection against it. The additional costs of such protection depend on too many factors to state in general. However, in some datacenters it is possible to lease a part of the device that does the mitigation (which could start at 50 USD per month).

