Efficiency of robotic vacuums at home

The objective of this research is to compare the efficiency of two types of robotic vacuum cleaners. The first type cleans using a random algorithm; the second uses an intelligent algorithm. Robotic vacuum cleaners are part of the IoT (Internet of Things) world, which is gaining popularity nowadays. The purpose of a robotic vacuum cleaner is to serve people and save them time by doing the cleaning for them.


Problem definition

The first problem to be solved is translating the algorithm of a real intelligent cleaner into the simulation environment. Intelligent cleaners use plenty of sensors to gather information about the real world. It is virtually impossible to reproduce their algorithms exactly, because companies protect them from disclosure and many programmers keep developing them. Nonetheless, I tried to simplify the algorithm and emulate its basic principle.

The second problem is to compare the efficiency of the intelligent algorithm with that of a cleaner moving randomly in a home environment. Efficiency is measured by how much of the dust in the room is cleaned and how long it takes to clean it.

Method

This research uses NetLogo as the simulation tool. NetLogo has several advantages for modelling a robot's behaviour in an environment, the biggest being its visualisation capabilities and its interactive approach. Therefore, I chose it as the most appropriate tool for the simulation.

Model

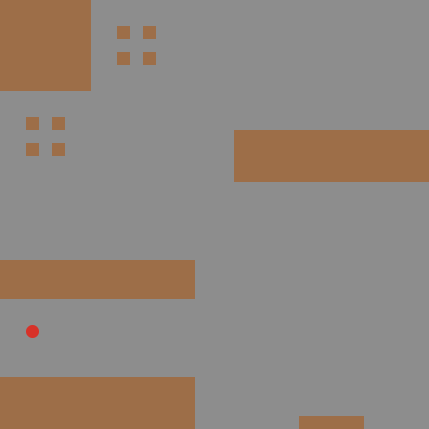

Environment (the room)

For realism, the environment is a room, most likely a living room, with furniture obstacles typical of an ordinary household. I laid out the obstacles as I imagine an ordinary living room could look. There is a table with chairs, a couch, a television and a kitchen with a bar. The room also has walls on each side that the cleaner cannot pass through.

All obstacle patches are brown. The floor is white and covered by grey dust, so it becomes visible only after the dust is vacuumed.
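To make the layout concrete, here is a minimal Python sketch of the environment as a patch grid. It is not the NetLogo model's code: the function name `make_room`, the set-of-coordinates representation and the rectangle format for furniture are assumptions made for illustration. Obstacle patches (walls and furniture, brown in the model) and dust patches (grey) are kept in two sets, so the amount of dust is simply the size of the dust set.

```python
def make_room(width, height, furniture):
    """Hypothetical room builder; returns (obstacles, dust) as sets of (x, y) patches.

    Walls occupy the border of the grid; `furniture` is a list of inclusive
    rectangles (x0, y0, x1, y1). Every other patch starts covered by dust.
    """
    obstacles, dust = set(), set()
    for x in range(width):
        for y in range(height):
            on_wall = x in (0, width - 1) or y in (0, height - 1)
            in_furniture = any(x0 <= x <= x1 and y0 <= y <= y1
                               for x0, y0, x1, y1 in furniture)
            # Each patch is either an obstacle (brown) or dusty floor (grey).
            (obstacles if on_wall or in_furniture else dust).add((x, y))
    return obstacles, dust
```

For example, `make_room(10, 8, [(3, 3, 5, 4)])` gives 42 dust patches and 38 obstacle patches: the border walls plus a 3 by 2 table.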

Cleaner with random algorithm

The simulation starts with the cleaner at a random position with a random heading. If the cleaner hits a wall or an obstacle, it bounces back in a random direction.
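The bounce rule can be sketched in Python as follows. This is an illustrative reimplementation, not the model's NetLogo code; the function name `random_step`, the position and heading representation, and the choice that the cleaner stays in place during the tick in which it bounces are assumptions. Headings follow the NetLogo convention (0 degrees is north, measured clockwise), so one step moves the cleaner by (sin h, cos h).

```python
import math
import random

def random_step(pos, heading, obstacles, dust, rng):
    """Advance the random cleaner by one tick; returns the new (pos, heading).

    pos is an (x, y) float position, heading is in degrees (0 = north),
    rng is a random.Random instance. Assumption: a bounce costs one tick.
    """
    nx = pos[0] + math.sin(math.radians(heading))
    ny = pos[1] + math.cos(math.radians(heading))
    ahead = (round(nx), round(ny))  # patch whose centre is nearest the point ahead
    if ahead in obstacles:
        # Bounce: stay in place and pick a new random heading.
        return pos, rng.uniform(0, 360)
    dust.discard(ahead)  # vacuum the patch the cleaner moves onto
    return (nx, ny), heading
```

Passing a seeded `random.Random` makes a run reproducible, which is convenient when comparing individual runs.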

Cleaner with intelligent algorithm

Years ago, the idea emerged that insects are not smart and simply follow a set of simple rules. It set off a new wave of artificial intelligence, and robotic vacuum cleaners are based on it as well. [1] A well-known model of this idea is Boids, created by Craig Reynolds in 1986. [2] Examples can be found in the NetLogo Models Library, such as Flocking or Ants. Although the most advanced cleaners use very complex algorithms nowadays, inspiration from Boids is sufficient in our case.

Following this principle, the cleaner is attracted by the dust (grey patches) around it, which stands in for a real cleaner's sensors knowing where the dust is and what has already been cleaned. However, the cleaner cannot see the dust in the whole room, because of obstacles: if a single patch of dust is left right behind an obstacle and lies within the cleaner's visibility, the cleaner keeps heading toward it and gets stuck. Therefore, the visibility of dust has to be limited to 3 patches, given that an obstacle is 1 patch thick (the additional patch is acceptable because of the bounce effect).

If there is no dust within its visibility, the cleaner heads in a random direction; this is the fastest way for it to reach another area where dust may be left. It bounces off walls and obstacles in the same way as the random cleaner.
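The intelligent rule can be sketched in Python in the same way. This is a hedged illustration rather than the author's NetLogo code: the name `intelligent_step` is hypothetical, and two details are assumptions not stated in the text. First, among the visible dust patches the cleaner turns toward the nearest one. Second, when no dust is visible it keeps its current heading, which is random after every bounce. The visibility radius of 3 patches comes from the text.

```python
import math
import random  # rng below is expected to be a random.Random instance

VISIBILITY = 3  # dust visibility radius in patches (from the text)

def heading_towards(pos, target):
    """NetLogo-style heading from pos to target: degrees, 0 = north, clockwise."""
    dx, dy = target[0] - pos[0], target[1] - pos[1]
    return math.degrees(math.atan2(dx, dy)) % 360

def intelligent_step(pos, heading, obstacles, dust, rng):
    """Advance the intelligent cleaner by one tick; returns (pos, heading).

    Assumptions: the cleaner turns toward the nearest dust patch within
    VISIBILITY; with no dust in sight it keeps its current heading.
    """
    visible = [p for p in dust if math.dist(pos, p) <= VISIBILITY]
    if visible:
        target = min(visible, key=lambda p: math.dist(pos, p))
        heading = heading_towards(pos, target)
    nx = pos[0] + math.sin(math.radians(heading))
    ny = pos[1] + math.cos(math.radians(heading))
    ahead = (round(nx), round(ny))  # patch whose centre is nearest the point ahead
    if ahead in obstacles:
        # Bounce exactly like the random cleaner: new random heading, no move.
        return pos, rng.uniform(0, 360)
    dust.discard(ahead)  # vacuum the patch the cleaner moves onto
    return (nx, ny), heading
```

The sketch also shows why visibility must be limited: dust seen behind an obstacle makes the cleaner turn toward it, bounce, and turn toward it again on the next tick.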

Simulation

The simulation has two buttons, Setup and Start. Setup prepares the environment (the room including furniture, dust and the cleaner). Start runs the simulation, moving the cleaner until the whole room is free of dust.
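The behaviour of the Start button, running tick by tick until no dust is left and counting the ticks, can be sketched as a small driver loop. The name `run_until_clean`, the `step` callback and the `max_ticks` safety limit are assumptions for illustration; the limit only guards against a run that never finishes.

```python
from typing import Callable, Set, Tuple

Cell = Tuple[int, int]

def run_until_clean(step: Callable[[], object], dust: Set[Cell],
                    max_ticks: int = 1_000_000) -> int:
    """Call `step` once per tick until `dust` is empty; return the tick count.

    The returned number of ticks is the time measure used in the results.
    """
    ticks = 0
    while dust:
        if ticks >= max_ticks:
            raise RuntimeError("room not cleaned within max_ticks")
        step()  # one move of the cleaner, which removes dust from `dust`
        ticks += 1
    return ticks
```

For instance, with `dust = {(1, 1), (1, 2), (2, 1)}` and a toy step `lambda: dust.pop()` that cleans one patch per tick, `run_until_clean` returns 3.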

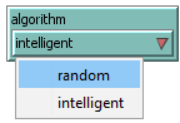

Unfortunately, the two cleaners have to be compared in two separate simulations because of the dust visualisation: only one cleaner may clean the room at a time, otherwise the result would be misleading. The cleaner type is selected with a chooser.

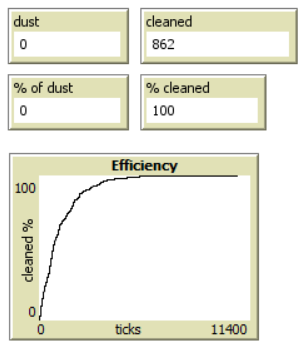

The statistics show the remaining and the cleaned dust both as absolute amounts and as percentages. The plot shows the percentage of cleaned dust over time, measured in ticks (moves of the cleaner).
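These statistics reduce to simple arithmetic on the dust count. A minimal sketch, assuming the hypothetical function name `dust_statistics` and that both the initial and the remaining amount of dust are counted in patches:

```python
def dust_statistics(initial_dust: int, remaining_dust: int) -> dict:
    """Hypothetical helper computing the counters shown in the interface.

    Both arguments are counts of dust patches; percentages are relative
    to the dust present after Setup.
    """
    if initial_dust <= 0:
        raise ValueError("the room must start with some dust")
    cleaned = initial_dust - remaining_dust
    return {
        "dust": remaining_dust,
        "cleaned": cleaned,
        "dust %": 100 * remaining_dust / initial_dust,
        "cleaned %": 100 * cleaned / initial_dust,
    }
```

For example, with 200 initial dust patches and 50 remaining, the cleaned share is 75 %. Recording the pair (tick, cleaned %) once per tick yields the data series drawn in the plot.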

Results

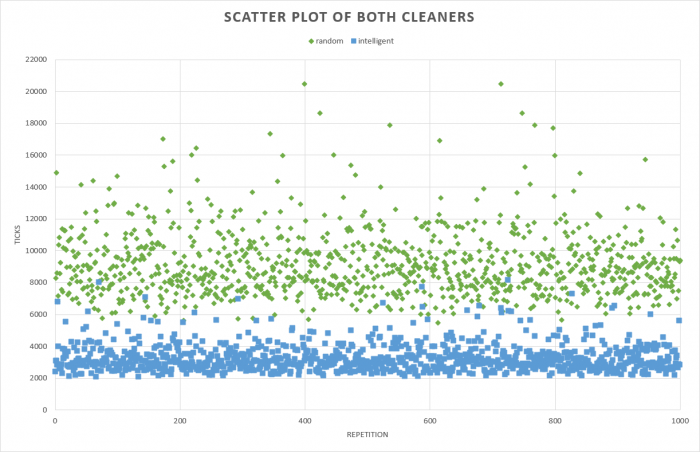

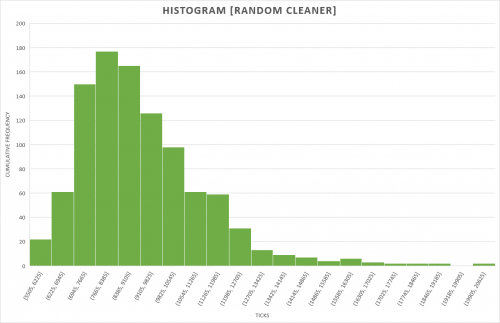

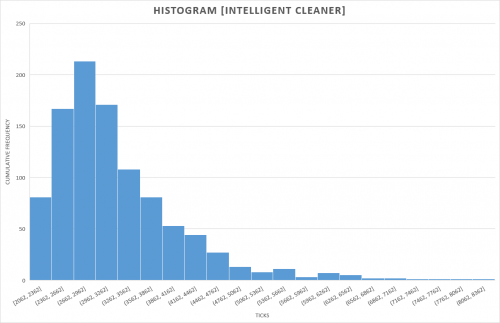

I used BehaviorSpace, which is part of NetLogo, to capture the results, with 1000 repetitions for each cleaner. The results are depicted in the scatter plot. The x-axis shows the repetition number and the y-axis the number of ticks taken to clean the room, so the sooner the room was cleaned, the lower the dot. Green dots represent the random cleaner and blue dots the intelligent one. The random cleaner is clearly much slower. To quantify the difference, I computed basic descriptive statistics, listed in the following table. Based on these values, the intelligent algorithm is almost three times faster than the random one, and thus more efficient, which also suggests that the intelligent algorithm works as intended. Additionally, I created histograms for both cleaners to illustrate the distribution of their values.

The raw data are in the attached file in the "Code" section.

| statistic (ticks) | random | intelligent |
|---|---|---|
| max | 20 470 | 8 119 |
| min | 5 505 | 2 062 |
| mean | 9 171 | 3 240 |
| median | 8 780 | 3 030 |
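The descriptive statistics in the table can be computed from the raw tick counts with Python's standard `statistics` module. This sketch assumes the tick counts of one cleaner have already been read from the results file into a list; the function name `describe` is hypothetical.

```python
import statistics
from typing import Sequence

def describe(ticks: Sequence[int]) -> dict:
    """Max, min, mean and median of the ticks needed to clean the room."""
    return {
        "max": max(ticks),
        "min": min(ticks),
        "mean": statistics.mean(ticks),
        "median": statistics.median(ticks),
    }
```

Applied separately to the 1000 tick counts of each cleaner, this yields one column of the table. The median is less sensitive than the mean to the long right tail visible in the random cleaner's results.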

Conclusion

The first problem, creating the intelligent algorithm, was in my opinion solved successfully. The cleaner did not get stuck in any of the 1000 repetitions; when it comes close to getting stuck, it switches to the random approach, which is itself part of its intelligence. Instead of wandering up and down in random directions, it has a known goal, or rather, it is attracted to the dust and therefore collects all of it.

The simulation was run with 1000 repetitions for each type of cleaner, which should be a sufficient number to draw conclusions. The results clearly show the better efficiency of the intelligent robotic vacuum cleaner. If the median is taken as representative of each cleaner's efficiency, the intelligent one is 2.9 times more efficient than the random one.
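The ratio can be checked directly against the results table. This short Python calculation divides each statistic of the random cleaner by the corresponding statistic of the intelligent one; the values are copied from the table, and the variable names are only illustrative.

```python
# Ticks needed to clean the room, copied from the results table.
random_cleaner = {"max": 20_470, "min": 5_505, "mean": 9_171, "median": 8_780}
intelligent_cleaner = {"max": 8_119, "min": 2_062, "mean": 3_240, "median": 3_030}

# How many times longer the random cleaner takes, per statistic.
speedup = {k: random_cleaner[k] / intelligent_cleaner[k] for k in random_cleaner}
for name, ratio in speedup.items():
    print(f"{name:>6}: {ratio:.2f}x")
# max: 2.52x, min: 2.67x, mean: 2.83x, median: 2.90x
```

The median ratio (about 2.90) corresponds to the 2.9x figure, and the mean ratio (about 2.83) is consistent with the intelligent cleaner being almost three times faster.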

Code

File:VacuumCleaner-results.xlsx

Sources

[1] https://www.cnet.com/news/appliance-science-how-robotic-vacuums-navigate/

[2] https://en.wikipedia.org/wiki/Boids

[3] http://electronics.howstuffworks.com/gadgets/home/robotic-vacuum2.htm