Multistage Games

Contents

Introduction

Normal-form games models a game where players choose their moves simultaneously without observing other players moves. Extensive-form games adds a possibility playing sequentially - allowing players to learn about the choices of previous players, so the player can condition his moves based on previous players decisions. Extensive-form games have their payoff expressed after the end of the game (one "grand game"). In reality, dynamic play over time may be more complex than one game that unfolds over time. Instead, players can play one game that is followed by another, or maybe even several other games.

- Should we consider each game independently, or should we expect players to consider the sequence of different games as one "grand game"?

- How to evaluate total payoffs from a sequence of payoffs in each of the sequentially played stage-games? What is the value of payoff in period N and in period N+20? If it was just a one-stage-game we could "solve" the game using Weak Perfect Bayesian Equilibrium...

- Will the players moves vary if the stage-game were not played sequentially but as independent games?

- Will a player vary from the stage-game Nash equilibrium to a different action, which will result him a higher payoff in the following stage-games?

Definitions

Multi-stage game

A definition of multi-stage game by S. Tadelis is following: A multi-stage game is a finite sequence of stage-games, each one being a game of complete but imperfect information (a simultaneous move game). These games are played sequentially by the same players, and the total payoffs from the sequence of games will be evaluated using the sequence of outcomes in the games that were played. We adopt the convention that each game is played in a distinct period, so that game 1 is played in period 1, game 2 in period 2, and so on. We will also assume that after each stage is completed, all the players observe the outcome of that stage, and that this information structure is common knowledge. [1]

Multi-stage game consists of multiple games. In each game, players have a set of action they choose from, and the profiles of actions lead to payoffs for that specific game, which is then followed by another game and another, until sequence of games is over. Once we realize that one game follows another, this implies that players can observe the outcomes of each game before another game is played. This observation is important because it allows players to condition their future actions on past outcomes. This is the idea at the center of multi-stage games: the ability to condition behavior may lead to a rich set of outcomes. In what follows, we will analyze the idea of conditional strategies, and the equilibrium that can be supported using such strategies.[1]

Other sources use term Multi-stage game with a wider meaning including also games with Perfect Information [2] which is in contrary to the [1]. Following material uses definition of S. Tadelis, which is mentioned above.

Information Set

Information Set is collection of decision nodes such that:

- When the play reaches a node in the information set, the player with the move does not know which node in the information set has been reached.

- The player has the same set of choices at each node in the information set.

Information Set can be used in extensive-form games to view a subgame with simultaneous moves of the players. If the player doesn't have the same set of choices at each node it is not an Information set. The point is that for the player its unknown and undecidable know at what node we are. Why is Information Set used? Because it allows us to view a complicated multi-stage game in a familiar tree view, which is also used in Dynamic Games.

Conditional strategies

If a multistage game consists of N games and we break up the games into N individual games and take it as an individual game. And if the players won't try to link them in any way, then the strategies would be pretty simple - each game is treated independently and each player chooses its best strategy to get the highest payoff in the respective stage game.

But in case of a multi-stage game players may want to link the individual games and create a strategy that will yield the best payoff. A simple example of linking different games can be following - If you play a game of chess with me now, I'll go with you to a cinema. If not, I won't go with you. Which generally means using strategies in form of "if A happens in games 1,2... n-1 then I will choose this action in game N". A simple way to explain conditional strategies is to take one of the below examples and write all extensive forms of strategies - "I will play F in the first game and I will play L in the second game only if player 2 played M in the first game. If player 2 played F in the first game then I will play G in the second."

To decide which Strategy would be the best to play for both of the players - similar to finding equilibrium like Nash equilibrium, we would have to introduce Sequential Equilibrium. [3] Sequential Equilibrium does specify a strategy for each player and a belief for each of the players. A belief gives, for each information set of the game belonging to the player, a probability distribution on nodes in the information set (see Bayesian Equilibrium).

Payoffs

How to evaluate total payoffs from all of the N stage-games? Is it a simple sum of all of the payoffs from all the stage-games? There is a well defined notion from economic analysis (and standard cost-benefit analysis) of present value which should be used to calculate the total payoff. [1]

It is easy to justify the assumption that payoffs obtained in earlier stage-game are worth more than payoffs obtained in later stage-games. Take in consideration financial markets - if a game played today yield a payment of 20 and another is played in a year and it will also yield 20, then today's value of the second payment will be worth less than 20. Say there is a 10% interest rate at which we can borrow money then we can borrow 20 today and repay 22 in a year, which is the future payoff from the game. So the 22 next year is worth exactly 20 today. Alternatively you can use analogy with discount, which you should be definitely familiar with. Another way to justify the assumption of discounting, or impatience, is that today's game is played now and its not 100% certain that the next will be played. For example if two players are playing a game in period one, there may be some probability that tomorrow's game won't be played. Then the payoff of the first game is more reliable and has a higher momentary value independent from the occurrence of the second game, because you have to take in account the probability of not-playing the game, ergo not getting the money.

For more detailed information about calculating the Total Payoff using discounted sum of payoffs that the player expects to get in the sequence of all stage-games see the [1]. But the idea is pretty simple - The payoffs in one period away are discounted once, two periods away are discounted twice and so on.

Subgame Perfect Equilibria

Since multi-stage games are dynamic in nature, and the past play is revealed over time, it is natural to turn to Subgame Perfect Equilibrium (SPE) as a solution concept. In particular, rational players should play sequentially rational strategies, which justifies the concept of SPE, and because total payoffs are defined above we are able to use it.

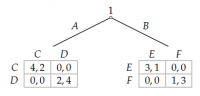

Example of a simple two-stage game of two players from [1] is shown on the side, number of possible pure strategies is 32x32 = 1024! If we cannot relay on playing sequentially rational strategies we should turn to the Sequential Equilibrium mentioned above.

Example - Extensive game with simultaneous moves[4]

The subgame following player 1’s choice of A has two Nash equilibria, (C, C) and (D, D); the subgame following player 1’s choice of B also has two Nash equilibria, (E, E) and (F, F). If the equilibrium reached after player 1 chooses A is (C, C), then regardless of the equilibrium reached after she chooses (E, E), she chooses A at the beginning of the game. If the equilibrium reached after player 1 chooses A is (D, D) and the equilibrium reached after she chooses B is (F, F), she chooses A at the beginning of the game. If the equilibrium reached after player 1 chooses A is (D, D) and the equilibrium reached after she chooses B is (E, E), she chooses B at the beginning of the game.

Thus the game has four subgame perfect equilibria: (ACE, CE), (ACF, CF), (ADF, DF), and (BDE, DE) (where the first component of player 1’s strategy is her choice at the start of the game, the second component is her action after she chooses A, and the third component is her action after she chooses B, and the first component of player 2’s strategy is her action after player 1 chooses A at the start of the game and the second component is her action after player 1 chooses B at the start of the game).

In the first two equilibria the outcome is that player 1 chooses A and then both players choose C, in the third equilibrium the outcome is that player 1 chooses A and then both players choose D, and in the last equilibrium the outcome is that player 1 chooses B and then both players choose E.

Questions

- What is the difference between Multi-stage game and Repeated game?

- Is the total payoff of a multi-stage game a sum of all payoffs of all stage games?

- What is Information Set?

- How is Information Set relevant to Information completeness in extensive-form game.

- Is there an easy way "to solve" Multi-stage game?

- Name the characteristics of a Multi-stage game by S. Tadelis.

References

- ↑ 1.0 1.1 1.2 1.3 1.4 1.5 Tadelis, Steve. Game Theory: An Introduction. Princeton: Princeton UP, 2013. Print.

- ↑ Rosu, Ioanid. "Multi-Stage Game Theory in Continous Time." Ioanid Rosu - Research. HEC Paris, 1 Jan. 2006. Web. 2 Feb. 2014.

- ↑ David M. Kreps and Robert Wilson. Sequential Equilibria, Econometrica 50:863-894, 1982.

- ↑ 4.0 4.1 Osborne, Martin J. An Introduction to Game Theory. New York: Oxford UP, 2004. Print.