Randomness tests

When dealing with random values and their sources (often commonly referred-to as random number generators, RNGs), it is important to know what behavior we may expect from used generators. To ensure the used RNG implementations meet certain levels of required quality (randomness), one can carry out various tests specifically designed to verify such properties (sometimes called ‘randomness tests’). In the worse-case scenarios, use of an inappropriate randomization source may have fatal consequences for the systems involved – ranging from simulation results being completely irrelevant to serious security vulnerabilities because of cryptographic weaknesses.

Contents

Introduction

In the previous chapters of this textbook, we have explained what pseudorandom number generators (PRNGs) are, their areas of application and why is it both difficult and important for computers to generate truly random numbers (or at least try to). Even when using quality seed sources (which may also include various truly random values), as long we are using deterministic algorithms to generate the output values, the best result we can get is just pseudo-random. However, given such algorithm or, in general, any other (possibly black-boxed or unknown) value source, we might want to analyze and measure the actual ‘randomness’ of their output, and in consequence also the quality of the considered source.

Definition

Because the requirements for such source will greatly differ, we should first start off by defining what a ‘random value’ means in our context of system simulations. To consider a set of values random, we usually should be able to state that independently for every single item, the probability of it having a certain value should be equal to probability of having any other value (or at least for it to appear so to a certain degree). In terms of our random number generators, this would mean we usually will require that the probability of occurrence of all possible values will correspond to a certain probability distribution, usually the uniform distribution (this is the case for most PRNGs). In consequence, the resulting set of values should be absent of any detectable, non-random patterns.

The problem with testing randomness is that given any (pseudo-random-generated) data, one can never truly determine their real randomness - and can only make a good guess at best (on a lighter note - there is a Dilbert comic strip humorously commenting this fact [2]). Although we cannot determine whether the true randomness of data, there is a number of options available to measure and quantify how much random the generated values appear to be - or to be more precise, how well the values fit the aforementioned 'randomness' specifications. Whether a generator passes such test is then only a matter of the limits of what we accept as sufficiently random - either the test results fall into our considered ranges and the generator succeeds, or they do not and it fails (and these criteria will differ for different PRNG use scenarios). To carry out most of those tests, we usually will operate on a set of data of specific fixed length - for obvious reasons, we cannot test an infinitely long (or generally too long, for that matter) dataset, meaning the values will usually be only a part of the random sequences a generator can produce - yet it still should be long enough (or the test should get repeated enough times) to 1) statistically eliminate the effect of patterns that will always occur in any shorter sequences by a chance and 2) to reveal possible algorithm deficiencies that might occur over longer periods of values (like recurring patterns after a full cycle of all values of a PRNG).

Tests

One of the first authors to discuss PRNG randomness testing was Donald Knuth in his book The Art of Computer Programming: Volume 2: Seminumerical Algorithms (1969) [1], presenting a complete battery of statistical tests mostly operating on empirical data of the generators. This battery of test was later adapted and extended by George Marsaglia in 1996 in his Diehard Battery of Tests of Randomness, also accompanied by test implementations in ANSI C language [2].

Some of the most common tests included in those batteries are described in following chapters.

Equidistribution test

One of the discussed requirements that a sequence must meet in order to be considered random is the uniform distribution of values in it. To verify that assumption, we can verify the frequency of every possible value in the result set and compare it with an expected probability distribution (i.e. uniform distribution). Generally speaking, there are two common methods that may be used to achieve this, the first being the Kolmogorov–Smirnov test. An alternative approach to this is using the (Pearson's) Chi-squared test, which is usable if we consider a smaller group of discrete values.

Because the equidistribution of single values itself is only a part of the requirements ensuring randomness, Knuth [1] also suggests extending this method to testing the frequency of pairs or other longer specific subsequences in the resulting sequence as a whole. Every of the combinations in one of those considered groups should be 'uniformly distributed in an independent manner'.

Autocorrelation

Autocorrelation (also known as serial correlation) is a term in statistics describing a correlation between values of a function at different points in time or different elements in a value sequence. As proposed by Knuth [1], for every single value, we compute the serial correlation coefficient between the value and a value preceding it. However, it is also be possible to calculate and measure many other variants of this coefficient for other consecutive elements. Truly random sequences should tend to have a smaller autocorrelation coefficient between its elements.

Given consecutive values Y1, Y2, ..., YN and lag k, the autocorrelation coefficient is defined as follows:

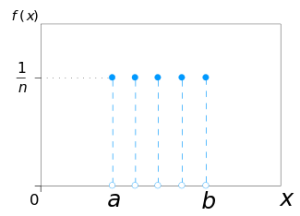

Run tests

The idea behind this kind of test is to count the "runs up" and "runs down" in the output sequence. A "run up" is an ascending subsequence of numbers, where for every element ![]() , and conversely

, and conversely ![]() for descending "run downs". The counts of these runs and their length should adhere to a certain probability distribution. Wald–Wolfowitz runs test is a specific case of this test targeting sequences of binary values. [4]

for descending "run downs". The counts of these runs and their length should adhere to a certain probability distribution. Wald–Wolfowitz runs test is a specific case of this test targeting sequences of binary values. [4]

Poker test

Poker test, as the name inspired by the classic card game suggests, is observing the number of matching values in a number of groups of five elements. Based on the amount of distinct values (cards), we make a Chi-squared test comparing the counts with an expected probability distribution [1].

Spectral test

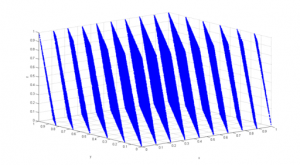

Unlike the most of previously mentioned tests, the spectral test operates on a full cycle (period) of a PRNG, more specifically a linear congruential generator (LCG, a type of PRNG) [3]. This test examines the lattice structures of those LCG-generated values by projecting values of every n consecutive elements onto n (theoretical) dimensions and measures their random distribution [5] [6] [7].

Simple visual analysis

As Bo Allen [4] or Mads of Random.org [5] suggest, a lot of obvious patterns can be easily spotted if we visualize the output of a generator. Although this does not substitute a full mathematical analysis, it can give a quick insight into the possible deficiencies of many PRNGs. On the illustrations below we can see a black/white output visualization of two PRNG functions in PHP language, first being a simple LCG, second being a more sophisticated Mersenne-Twister generator, inspired by an article by Bo Allen [4].

Other tests

Among other commonly suggested tests we may also mention the Monte Carlo PI value estimation, simple arithmetic mean or information entropy calculation (which may also be easily tested by simply compressing the considered data using any well known compression software and compare the compression ratios) [6], gap test, coupon-collector test, tests using Walsh-Hadamard transform [7], birthday spacing test (measuring distances between randomly chosen points on a large interval) and many other similar tests [2].

Recommended reading

- Testing Random Number Generators - Jerry Dwyer and K.B. Williams, 1996

- Testing Random Number Generators, Part 2 - Jerry Dwyer and K.B. Williams, 1996

- Diehard Battery of Tests of Randomness - The Marsaglia Random Number CDROM

- ENT - A Pseudorandom Number Sequence Test Program

- Randomness Tests: A Literature Survey

References

- ↑ 1.0 1.1 1.2 1.3 KNUTH, Donald Ervin. The art of computer programming. 3rd ed. Reading: Addison-Wesley Publishing Company, 1998, xiii, 762 s. Classic work newly updated and revised. ISBN 0-201-89684-2.

- ↑ 2.0 2.1 The Marsaglia Random Number CDROM including the Diehard Battery of Tests of Randomness. Florida State University Department of Statistics [online]. [2016-01-24]. Available at: http://stat.fsu.edu/pub/diehard/

- ↑ WILLIAMS, Williams a Jerry DWYER. Testing Random Number Generators, Part 2. Dr. Dobb's Journal [online]. 1996 [2016-01-25]. Available at: http://drdobbs.com/184403208

- ↑ 4.0 4.1 ALLEN, Bo. Pseudo-Random vs. True Random: A Simple Visual Example. Bo Allen [online]. [2016-01-25]. Available at: http://boallen.com/random-numbers.html

- ↑ MADS, Haahr. Statistical Analysis. Random.org [online]. [2016-01-25]. Available at: https://www.random.org/analysis/

- ↑ ENT. Pseudorandom Number Sequence Test Program [online]. [cit. 2016-01-25]. Available at: http://www.fourmilab.ch/random/

- ↑ Feldman, F. 1987. Fast Spectral Tests for Measuring Nonrandomness and the DES. Advances in Cryptology -- CRYPTO '87. 243-254. Springer-Verlag.